Then use html_element() to extract one element per film. Here the title is given by the text inside the h2 element: title <- films %>% html_element("h2") %>% html_text2(). Printing title returns "The Phantom Menace", "Attack of the Clones", "Revenge of the Sith", "A New Hope", "The Empire Strikes Back", "Return of the Jedi" and "The Force Awakens". Or use html_attr() to get data out of attributes.

The section for the last film reads "The Force Awakens", followed by "Released: 2015."
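For readers working in Python, a rough BeautifulSoup counterpart of these rvest steps might look like the sketch below. The inline HTML is a hypothetical stand-in for the film sections, and the data-id attribute is an assumption used only to illustrate reading an attribute.

```python
from bs4 import BeautifulSoup

# Hypothetical markup modelled on the film sections described in the text.
# The data-id attribute is an assumption made for illustration.
html = """
<section><h2 data-id="1">The Phantom Menace</h2><p>Released: 1999.</p></section>
<section><h2 data-id="7">The Force Awakens</h2><p>Released: 2015.</p></section>
"""

soup = BeautifulSoup(html, "html.parser")

# One <section> per film, similar in spirit to html_elements("section").
films = soup.find_all("section")

# One <h2> per film, then its text, similar to html_element("h2") and html_text2().
titles = [film.find("h2").get_text(strip=True) for film in films]

# Reading an attribute value, similar to html_attr(); the value is a string.
episode_ids = [film.find("h2")["data-id"] for film in films]

print(titles)
print(episode_ids)
```

Attribute values come back as strings here, so convert them with int() if numbers are needed.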

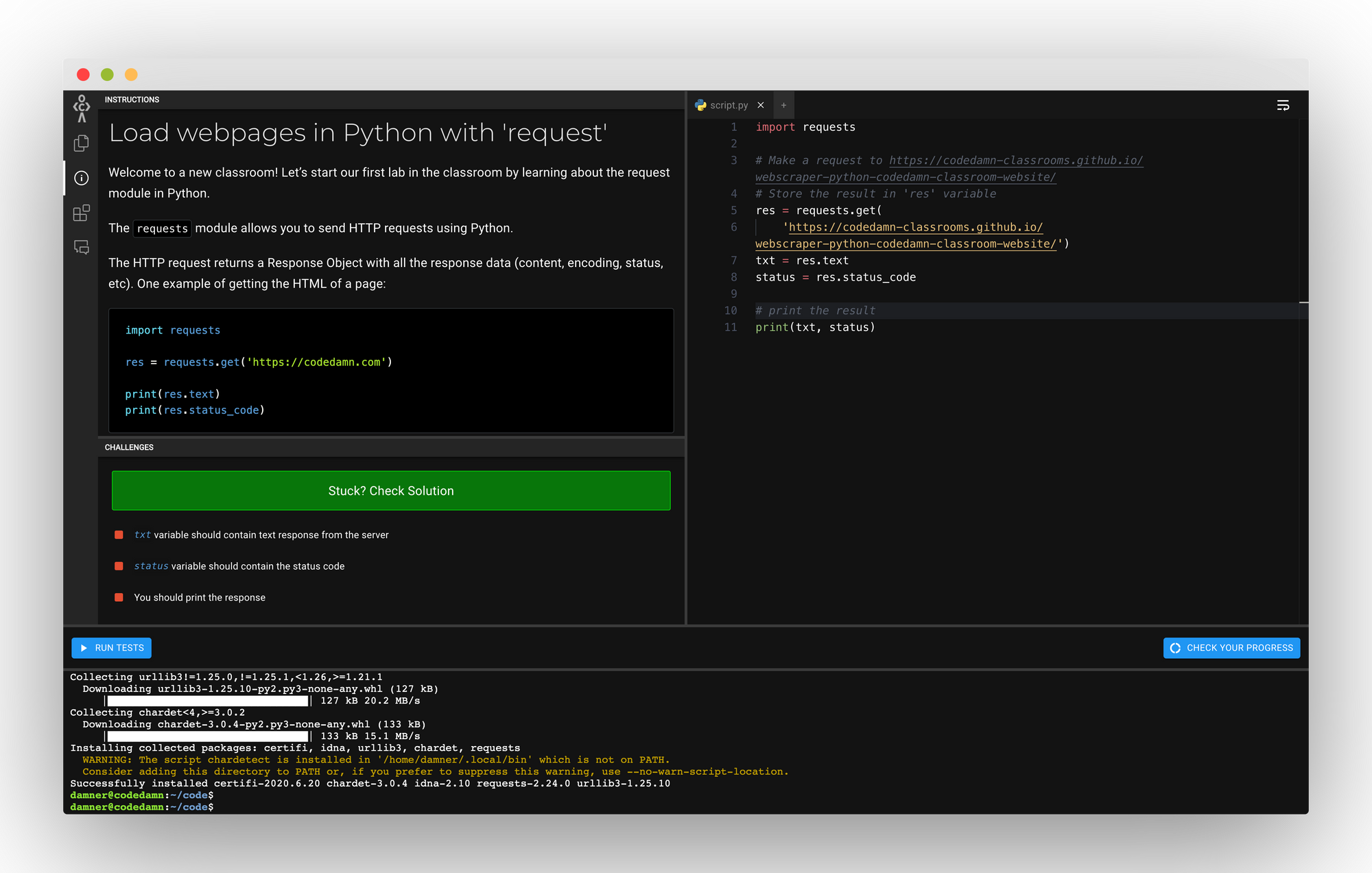

The end goal of this course is to scrape blogs to analyze trending keywords and phrases. We'll be using Python 3.6, Requests, BeautifulSoup, Asyncio, Pandas, NumPy, and more. Speedy, lightweight web scraper for Shutterstock.

BEAUTIFUL SOUP GITHUB WEBSCRAPER HOW TO

With rvest in R, load the package with library(rvest) and start by reading a HTML page with read_html(), storing the result as starwars. In this example each section corresponds to a different film: films <- starwars %>% html_elements("section"). Printing films shows one entry per film, including "The Phantom Menace ... Released: 1999." and "Return of the Jedi ... Released: 1983.", along with Attack of the Clones, Revenge of the Sith and The Empire Strikes Back, whose release years are truncated in the printed output.

Learn how to leverage Python's amazing tools to scrape data from other websites.

Once you have extracted the HTML content of a web page and stored it in a variable, say html_obj, you can convert it into a BeautifulSoup object with just one line of code: soup_obj = BeautifulSoup(html_obj, 'html.parser')

Here html_obj is the HTML data, soup_obj is the BeautifulSoup object that is returned, and 'html.parser' is the parser used for the conversion. Once you have soup_obj, traversing it is straightforward, and so data extraction becomes simple as well.

Say you need to fetch a data point called the product title, which is present on every page of an eCommerce website. You download a single HTML product page from that website and find that each page has the product name in an element of type span with the id productTitle. So how will you fetch this data from, say, 1000 product pages? You get the HTML data for each page and fetch the data point with a loop of the form for spans in soup.findAll('span', attrs=...), filtering on the productTitle id.

Structured data embedded in a page can be collected in a similar way. Save the page with with open('house_listing_data.html', 'wb') as file:, start with an empty property_details, and loop over the script tags with for (i, script) in enumerate(soup.findAll('script', ...)). When the index matches (if i == 1:, with a double equals sign for the comparison), set property_details = json_data and break out of the loop. Then write the result with with open('house_listing_data.json', 'w') as outfile: followed by json.dump(property_details, outfile, indent=4). Check the JSON file.
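The two extraction patterns described above can be sketched as follows. This is a minimal illustration under stated assumptions, not the original author's code: the sample pages, the helper names extract_product_title and extract_property_details, and the application/ld+json script type are all hypothetical, since the source does not show the full attrs arguments. It uses find_all, the current name for findAll.

```python
import json
import os
import tempfile

from bs4 import BeautifulSoup

# Hypothetical product pages; in practice this would be the HTML of each downloaded page.
pages = [
    '<html><body><span id="productTitle"> Blue Kettle </span></body></html>',
    '<html><body><span id="productTitle">Steel Pan</span></body></html>',
    '<html><body><span class="other">No title here</span></body></html>',
]


def extract_product_title(html_obj):
    """Return the text of the span with id productTitle, or None if it is absent."""
    soup = BeautifulSoup(html_obj, "html.parser")
    span = soup.find("span", attrs={"id": "productTitle"})
    return span.get_text(strip=True) if span is not None else None


titles = [extract_product_title(page) for page in pages]
print(titles)

# Hypothetical listing page with JSON embedded in script tags.
# The script type used as a filter is an assumption.
listing_html = """
<html><head>
<script type="application/ld+json">{"@type": "WebSite"}</script>
<script type="application/ld+json">{"@type": "House", "name": "Sample listing"}</script>
</head></html>
"""


def extract_property_details(html_obj, index=1):
    """Parse the JSON held in the script tag at position `index`, or return {}."""
    soup = BeautifulSoup(html_obj, "html.parser")
    scripts = soup.find_all("script", attrs={"type": "application/ld+json"})
    for i, script in enumerate(scripts):
        if i == index and script.string:
            return json.loads(script.string)
    return {}


property_details = extract_property_details(listing_html)

# Write to the system temp directory so the sketch does not clutter the working folder.
out_path = os.path.join(tempfile.gettempdir(), "house_listing_data.json")
with open(out_path, "w") as outfile:
    json.dump(property_details, outfile, indent=4)
print(out_path)
```

Returning None or an empty dict for pages that lack the expected element keeps a run over many pages from stopping at the first page with a different layout.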

BEAUTIFUL SOUP GITHUB WEBSCRAPER CODE

Introduction To Web Scraping With Python:

When it comes to web scraping, some programming languages are preferred over others. One of the most popular among these is Python. Besides being one of the easiest languages to learn, thanks to its gentle learning curve, it has massive developer support, which has led to numerous third-party packages. These packages provide functionality that may be difficult to implement with core Python. Some common examples: for image processing or computer vision we use OpenCV, for machine learning we use TensorFlow, and for plotting graphs we use Matplotlib.

When it comes to web scraping, one of the most commonly used libraries is BeautifulSoup. This library does not itself scrape data from the internet, but if you can get the HTML file of a web page, it can help extract specific data points from it. In general, the library is used to extract data points from XML and HTML documents.

How does BeautifulSoup work?

Before you go on to write code in Python, you have to understand how BeautifulSoup works.
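As a minimal sketch of that idea, the example below parses HTML that is already in hand, with no network access involved. The markup, the headline id and the intro class are hypothetical and serve only as an illustration.

```python
from bs4 import BeautifulSoup

# Hypothetical HTML, standing in for a page you have already saved or downloaded.
html_obj = """
<html>
  <head><title>Sample page</title></head>
  <body>
    <h1 id="headline">Web scraping with Python</h1>
    <p class="intro">BeautifulSoup parses markup; it does not download it.</p>
  </body>
</html>
"""

# "html.parser" is the parser that ships with Python's standard library.
soup_obj = BeautifulSoup(html_obj, "html.parser")

# Navigate the parsed tree by tag name or by attributes.
page_title = soup_obj.title.get_text()
headline = soup_obj.find("h1", attrs={"id": "headline"}).get_text()
intro = soup_obj.find("p", attrs={"class": "intro"}).get_text()

print(page_title)
print(headline)
print(intro)
```

Fetching the page is a separate step, typically handled by a library such as Requests, after which the response text is passed to BeautifulSoup.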